Auditory Scene Analysis (see also SOUNDSCENE)

Auditory Scene Analysis (ASA) is the process by which the brain makes sense of the complex sound mixture that arrives at the ear in order to identify the sources that created the mixture. To recognise the source of a sound, the auditory brain must first segregate the sound elements that belong to that source, then group them together over frequency and time, and finally assign the resulting auditory object to a previously learned category. The representation of an auditory object must be selective for certain sound features while being tolerant of others. You can read more about this process here.

Perceptual Invariance

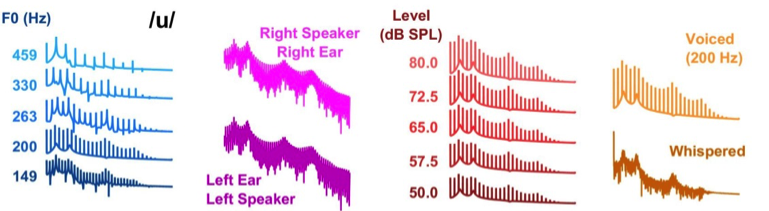

We are able to recognize sounds (such as speech) despite considerable variation in their acoustic structure. The ability to recognize objects across variation in their sensory input (such as different viewing angles for a visual object) is known as perceptual invariance. In this study we trained ferrets to discriminate artificial vowels across a variety of identity-preserving transformations: changes in pitch, loudness, spatial location and voicing (voiced versus whispered), as well as the addition of background noise. We then asked to what extent auditory cortical responses were tolerant to these changes. Our findings suggest that auditory cortical neurons multiplex task-relevant (i.e. vowel identity) and task-orthogonal (pitch, level, etc.) stimulus dimensions. You can read our preprint article here.

Auditory Space

How is space represented in the brain? The ear encodes sound frequency, not location, and therefore space has to be computed within the brain from localisation cues. Comparing the sound at the two ears yields interaural timing and level cues: a sound on the left will arrive at the left ear sooner than at the right and, because of the acoustic shadow cast by the head, will be louder in the left ear than in the right. Spectral cues result from the interaction between the incoming sound wave and the complex folds of the outer ear (pinna), which impose characteristic location-dependent filtering effects. The cues to sound location are therefore inherently head-centered, i.e. encoded in a co-ordinate frame defined by the head. A head-centered neuron fires whenever a sound is in a particular position relative to the head (say, straight ahead). If the sound source is at a fixed location in the world (by the door, for example), such a neuron will fire only when the head is facing the door. Surprisingly, however, a small proportion of neurons in auditory cortex encode the location of the sound in the world (an allocentric reference frame): such a neuron might fire whenever a sound comes from near the door, irrespective of which way the head is pointing. You can read the paper here.
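The difference between the two reference frames can be made concrete with a small sketch. It is purely illustrative and not taken from the paper: the function names, the sign convention (positive azimuth to the right), and the use of Woodworth's spherical-head approximation for the interaural time difference (ITD), with an assumed head radius of 8.75 cm and a speed of sound of 343 m/s, are all assumptions chosen for the example.

```python
import math

HEAD_RADIUS_M = 0.0875      # assumed head radius (illustrative, roughly human)
SPEED_OF_SOUND_M_S = 343.0  # assumed speed of sound in air


def wrap_deg(angle_deg):
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle_deg + 180.0) % 360.0 - 180.0


def head_centered_azimuth(source_world_deg, head_direction_deg):
    """Azimuth of a source relative to the head (egocentric frame)."""
    return wrap_deg(source_world_deg - head_direction_deg)


def world_centered_azimuth(source_head_deg, head_direction_deg):
    """Recover the azimuth of a source in the world (allocentric frame)."""
    return wrap_deg(source_head_deg + head_direction_deg)


def interaural_time_difference(source_head_deg):
    """Woodworth spherical-head approximation of the ITD, in seconds.

    Positive values mean the sound reaches the right ear first.
    Sources behind the head are folded onto the frontal hemifield.
    """
    az = wrap_deg(source_head_deg)
    if abs(az) > 90.0:
        az = math.copysign(180.0 - abs(az), az)
    theta = math.radians(az)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


# A source fixed "by the door" at 30 degrees in the world, heard with
# three different head directions (hypothetical values).
door_world_deg = 30.0
for head_direction_deg in (-60.0, 0.0, 30.0):
    az_head = head_centered_azimuth(door_world_deg, head_direction_deg)
    itd_us = interaural_time_difference(az_head) * 1e6
    az_world = world_centered_azimuth(az_head, head_direction_deg)
    print(f"head {head_direction_deg:+6.1f}  source re head {az_head:+6.1f}  "
          f"ITD {itd_us:+7.1f} us  source re world {az_world:+6.1f}")
```

In this sketch the head-centered azimuth and the ITD change with every head turn, while the world-centered azimuth stays at 30 degrees. Recovering the world-centered value requires combining the head-centered cue with a signal about head direction.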

We're also interested in how neural firing in auditory cortex represents spatial perception. To study this, we record neural activity while subjects perform the behavioural task outlined in Wood and Bizley 2015.

Selective attention

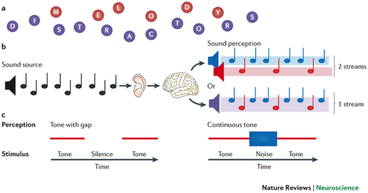

The brain is bombarded with sound. Paying attention to one sound in a mixture (for example, a friend's voice in a busy restaurant) allows us to pick that sound out, such that other competing sounds become background. This process of 'tuning in' to a sound of interest is accompanied by changes in the brain that allow that sound to be preferentially processed. While we can observe that these changes occur in human listeners, we cannot elucidate the cellular mechanisms that allow auditory cortex to selectively process sounds of interest. In this project our goal is to understand how attention shapes the responses of single neurons and neural networks within auditory cortex.

Auditory Visual integration

Why does auditory cortex respond to light?

Over the past decade there has been a paradigm shift in how we view early sensory cortex: we now know that multisensory interactions are abundant at the earliest stages of sensory processing. Despite physiological and anatomical evidence in support of crossmodal integration in sensory cortex, the role that such early integration plays in perception is much less clear. Exactly how and where crossmodal signals are linked (“bound”) to form coherent perceptual constructs is also unknown. We believe that one role for integrating visual information into auditory cortex is to support multisensory binding, so that when we look at a sound of interest we are better able to pull that sound out of the mixture of background sounds.

Current research topics in the lab seek to understand how and where different sorts of multisensory integration occur within the brain.

How does vision help with Auditory Scene Analysis?

Over the past decade there has been a paradigm shift in how we view early sensory cortex: we now know that multisensory interactions are abundant at the earliest stages of sensory processing. In this research we ask whether one role for integrating visual information into auditory cortex is to support multisensory binding, and whether audio-visual binding can enhance auditory scene analysis, i.e. the ability to separate an auditory scene into its component sources. We have both behavioural and neurophysiological evidence that visual information can help listeners to separate competing sounds in a mixture. You can read more about this here.

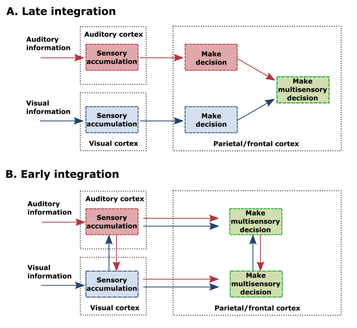

When is visual information integrated for decision-making?

Making a decision based on multisensory information likely involves weighting signals from different sensory modalities according to their reliability (in a Bayesian-like manner). However, what happens when the signals within each modality already contain multisensory information? In this project we try to tease apart how early and late multisensory integration contribute to perception and decision-making.
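One standard formalisation of reliability weighting is maximum-likelihood cue combination, in which each cue is weighted by its inverse variance. The sketch below is an illustration under that textbook assumption (independent Gaussian noise on each cue); the function name and the example numbers are hypothetical, and it is not the model used in this project.

```python
def combine_cues(estimates, sigmas):
    """Combine single-cue estimates by inverse-variance weighting.

    estimates: the value each cue reports (e.g. location in degrees).
    sigmas:    the standard deviation of the noise on each cue.
    Returns (combined_estimate, combined_sigma, weights).
    """
    # Reliability of a cue is the inverse of its noise variance.
    reliabilities = [1.0 / (s ** 2) for s in sigmas]
    total = sum(reliabilities)
    # Each cue's weight is its share of the total reliability.
    weights = [r / total for r in reliabilities]
    combined_estimate = sum(w * x for w, x in zip(weights, estimates))
    # The combined estimate is never less reliable than the best single cue.
    combined_sigma = (1.0 / total) ** 0.5
    return combined_estimate, combined_sigma, weights


# Hypothetical example: a noisy auditory estimate at 10 degrees and a
# more reliable visual estimate at 0 degrees.
estimate, sigma, weights = combine_cues(estimates=[10.0, 0.0], sigmas=[4.0, 2.0])
print(f"combined estimate {estimate:.2f} deg, sigma {sigma:.2f} deg, weights {weights}")
```

With these numbers the visual cue has four times the reliability of the auditory cue, so the combined estimate is pulled most of the way towards the visual location.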

How do we direct attention to one modality over another?

While we often want to integrate what we see and hear, this is not always the case. For example, if we reach a complex road junction when driving, we want to ignore the sound of the radio or the voice of our passenger while we look for hazards. We have designed a behavioural paradigm in which subjects are required to switch between localizing sounds in the presence of a visual distractor and localizing visual signals in the presence of a sound. By recording from sensory cortex, parietal cortex and frontal cortex, we hope to determine how attentional state modulates functional connectivity between brain regions and how this allows us to choose whether to attend to or ignore a sensory modality.
